Mice are among the most widely used animals in experiments.

Do they change their facial expressions with emotions such as joy, sadness, and fear?

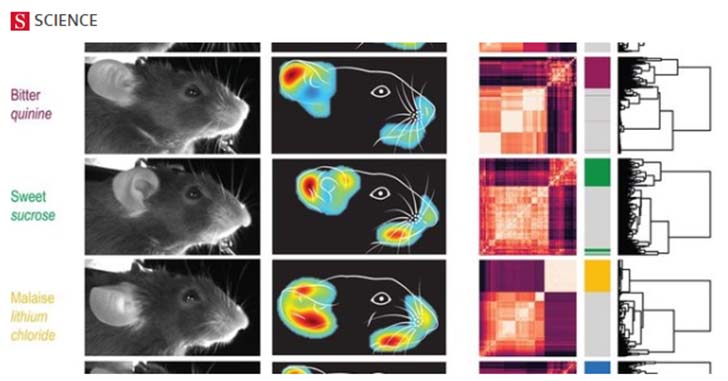

A research group led by Nadine Gogolla at the Max Planck Institute of Neurobiology in Germany has shown that mice change their facial expressions in response to a variety of stimuli.

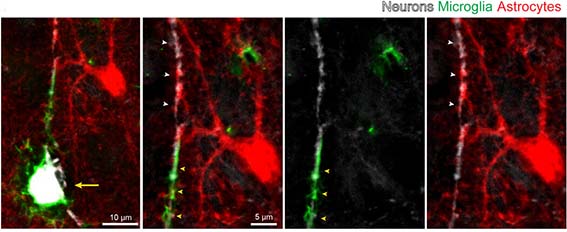

The neuroscientific investigation of emotions is hindered by a lack of rapid and precise readouts of emotion states in model organisms. Dolensek et al. identified facial expressions as innate and sensitive reflections of the internal emotion state in mice (see the Perspective by Girard and Bellone). Mouse facial expressions evoked by diverse stimuli could be classified into emotionlike categories, similar to basic emotions in humans. Machine-learning algorithms categorized mouse facial expressions objectively and quantitatively at millisecond time scales. Intensity, value, and persistence of subjective emotion states could thus be decoded in individual animals. Combining facial expression analysis with two-photon calcium imaging allowed the identification of single neurons whose activity closely correlated with specific facial expressions in the insular cortex, a brain region implicated in affective experiences in humans. Science, this issue p. 89; see also p. 33.

Understanding the neurobiological underpinnings of emotion relies on objective readouts of the emotional state of an individual, which remains a major challenge especially in animal models. We found that mice exhibit stereotyped facial expressions in response to emotionally salient events, as well as upon targeted manipulations in emotion-relevant neuronal circuits. Facial expressions were classified into distinct categories using machine learning and reflected the changing intrinsic value of the same sensory stimulus encountered under different homeostatic or affective conditions. Facial expressions revealed emotion features such as intensity, valence, and persistence. Two-photon imaging uncovered insular cortical neuron activity that correlated with specific facial expressions and may encode distinct emotions. Facial expressions thus provide a means to infer emotion states and their neuronal correlates in mice.

Mice, too, appear to have facial expressions.

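The researchers developed a machine-learning algorithm that distinguishes mouse facial expressions, and showed that a range of emotion states (pleasure, disgust, nausea, pain, and fear) can be identified from the face.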

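Using live two-photon calcium imaging, they also captured neurons in the insular cortex whose activity changed in step with specific facial expressions, suggesting that these neurons may encode distinct emotions.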

References

- Nejc Dolensek, Daniel A. Gehrlach, Alexandra S. Klein, Nadine Gogolla. "Facial expressions of emotion states and their neuronal correlates in mice." Science, 2020. doi: 10.1126/science.aaz9468

- Max-Planck-Gesellschaft. "The facial expressions of mice: The face of a mouse reveals its emotions." ScienceDaily. ScienceDaily, 2 April 2020. <www.sciencedaily.com/releases/2020/04/200402144430.htm>.